Yuber wrote:How does the Rift's refresh rate work(2 rates, 1 for each eye?), and what's its native refresh rate? VR@120fps+ would probably blow my mind. I forgot the name of the tech some newer LCD monitors use to prevent motion blur, but I'm curious how well it works. CRTs are still king in that regard. My 1080p monitor is ancient in PC terms, and it's got pretty bad motion blur. It's especially annoying when I play platformers. I can still play them, but motion blur is a huge eyesore.

The Rift is one screen displaying two viewports, one aligned to each lens, so there's a single refresh rate for both eyes. Right now, the devkit screen is 1280x800 (640x800 per eye) at 60 Hz. The HD devkit they're using is 1080p at 60 Hz. They've hinted that they're considering something like a 1440p screen at either 60 or 120 Hz, that the goal ultimately is to arrive around 4K at 120 Hz, and that the ultimate dream is 8K at 240 Hz.
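To make the "one screen, two viewports" idea concrete, here's a rough sketch. It assumes a plain side-by-side split down the middle of the panel, and `per_eye_viewports` is just a name I made up for illustration, not anything from the Rift SDK.

```python
def per_eye_viewports(width, height):
    """Split one panel into left/right viewports as (x, y, w, h) tuples.

    Assumption: a simple side-by-side split at the horizontal midpoint.
    """
    half = width // 2
    left = (0, 0, half, height)
    right = (half, 0, half, height)
    return left, right


# A 1080p panel (1920x1080) gives each eye a 960x1080 slice.
left, right = per_eye_viewports(1920, 1080)
print(left, right)
```

Both viewports live on the same panel, so they refresh together.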

A word about framerates and motion tracking: I think there needs to be a distinction among types of tracking. The term "motion controls" is misleading and limited. Motion controls really refers to gesture input - a system reading a motion and trying to interpret that into an activator for an action. The most obvious type of motion input is waggle - moving a controller up and down so that the system reads that gesture and maps it to a virtual button press. You waggle in Mario Galaxy to spin, for example. Your waggle isn't even close to what is represented on screen.

People sometimes say 1:1 motion controls, which is closer to what the newer devices do. The more accurate, emerging term for this type of tracking is positional tracking. What this means is that instead of reading a gesture and mapping it to a pre-canned action, the computer reads where your extremity is and how it's oriented, and maps that to a virtual object. It's important to distinguish between orientation and position - orientation is pitch, yaw, and roll, i.e. rotation about each axis. Think back to trig - a vector differs from a vertex in that a vector contains direction. You describe orientation with vectors. Position, by contrast, is described as a vertex, a single point in X, Y, Z space.
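If it helps, here's how I'd picture the orientation/position split as data. This is only a sketch: the class names (`Orientation`, `Position`, `Pose`) and the choice of degrees are my own assumptions, and "roll" is the third rotation alongside pitch and yaw.

```python
from dataclasses import dataclass


@dataclass
class Orientation:
    """Which way the object is facing, in degrees (assumed unit)."""
    pitch: float
    yaw: float
    roll: float


@dataclass
class Position:
    """Where the object is: a single point in X, Y, Z space."""
    x: float
    y: float
    z: float


@dataclass
class Pose:
    """Position plus orientation: what a positional tracker reports."""
    position: Position
    orientation: Orientation


# A hypothetical tracked hand, with made-up numbers.
hand = Pose(Position(0.2, 1.1, -0.4), Orientation(pitch=30.0, yaw=-15.0, roll=0.0))
print(hand)
```

A tracker that only fills in `Orientation` knows which way you're facing but not where you are.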

Positional tracking takes both of these into account. By nature, things which are positionally tracked don't need gesture tracking. If the computer can figure out which way you're holding a virtual sword - its orientation (pitch, yaw, roll) and its position (the handle in your hand at X, Y, Z) - and can refresh that 60 times a second, accurate to your real-world position, then you don't need to cross a threshold before the computer recognizes a gesture and begins an attack animation. Simply swinging the sword IRL will be enough to attack.
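Here's a toy comparison of the two approaches. Everything in it is hypothetical (the threshold value, the function names, the sample numbers); it's only meant to show that a gesture system waits for a threshold crossing, while a 1:1 system just copies every tracked sample onto the virtual sword.

```python
SWING_THRESHOLD = 3.0  # hypothetical acceleration threshold for a "swing"


def gesture_attack(accel_samples, threshold=SWING_THRESHOLD):
    """Gesture style: return the index of the first sample that crosses
    the threshold (when the canned attack would start), or None."""
    for i, accel in enumerate(accel_samples):
        if abs(accel) >= threshold:
            return i
    return None


def positional_update(tracked_positions):
    """1:1 style: the virtual sword takes on every tracked position as-is."""
    return [tuple(p) for p in tracked_positions]


# The gesture system ignores the first two samples of the swing entirely.
print(gesture_attack([0.5, 1.0, 3.5, 4.0]))
# The positional system follows the hand from the very first sample.
print(positional_update([(0.0, 1.0, 0.0), (0.1, 1.1, 0.0)]))
```

With the gesture version, nothing happens on screen until the threshold is crossed, and what happens then is an animation, not your actual motion.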

Gesture tracking is imprecise and laggy by nature. Old motion controllers for last gen systems were slow and had limitations. The Wiimote could do positional tracking, but only in a very narrow field of view, and only when the controller was oriented so that its camera could see the IR LEDs in front of it. When you held the Wiimote and pointed it at the screen, it had pretty accurate positional tracking - using the 2 IR LEDs it could accurately map the roll of the Wiimote, the distance from the TV (Z), the height relative to the TV (Y), and the horizontal offset from the TV (X). It couldn't map pitch and yaw very accurately - those were gleaned relative to a starting position via accelerometers. Accelerometers, as used here, report the degree of change without regard to the point of origin. So an accelerometer reports back stuff like "from last poll, we moved +5 in pitch and -7 in yaw" without giving a frame of reference. The problem is, with this old motion control tech, these accelerometers were subject to drift, so that movement one way might report back +5 pitch, and the exact opposite movement backwards might incorrectly report back -4 pitch (instead of -5), meaning the tracking would slowly, over time, get further and further off.
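The drift problem is easy to see with the +5 / -4 numbers from the example. This is a minimal sketch, assuming the system just sums the reported deltas to estimate pitch; `integrate` is a made-up helper, not how any particular controller firmware does it.

```python
def integrate(deltas, start=0.0):
    """Estimate the current pitch by summing relative changes from a start value."""
    total = start
    for delta in deltas:
        total += delta
    return total


# 100 back-and-forth motions: the hand really ends up where it started.
true_motion = [+5, -5] * 100
# The sensor under-reports the return stroke by 1 each time.
reported = [+5, -4] * 100

print(integrate(true_motion))  # 0.0
print(integrate(reported))     # 100.0
```

With no absolute reference to correct against, that error only ever grows.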

When the Wiimote wasn't pointing at the screen, it had to rely entirely on these accelerometers for both positioning and orientation, and that's where things got inaccurate. Without line of sight to the TV, the tracking couldn't be trusted, and that's why devs would resort to gesture monitoring. If all they can read from the remote is rate of change, then the most complex motions they can read are stuff like waggles or waving motions.

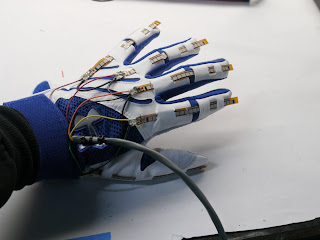

These new VR devices work differently. They don't rely on accelerometers alone; they measure against a fixed frame of reference, usually a magnetic one. (Strictly speaking, a gyroscope only measures rotation rate; it's a magnetometer that uses something like the earth's magnetic field as its reference.) With a frame of reference, you can get an accurate reading of absolute position and orientation. The Razer Hydra and Sixense STEM are built on this idea. They include a base which emits a magnetic field and acts as a central reference point for the controllers within a cube about 6 feet on a side (9 feet on the STEM). That means you can picture a cube 9 foot by 9 foot by 9 foot surrounding the base, and within that cube the trackers get 1:1 tracking of position and orientation, without any line of sight. No line-of-sight limitation means you don't have to rely on gesture monitoring. Everything is tracked directly.

Getting back to the Rift, it currently tracks orientation but not position, though it will track position going forward. Even when it does, it won't have to rely on reading motion. It's not you swinging your head, the Rift measuring the swing, and the game working out where the camera should end up - that's slow and laggy. Instead, the Rift reports its orientation 60 times a second, and the computer aligns the in-game camera to whatever orientation the Rift reads. That's why latency is such a big deal: any delay between the reading and the displayed frame shows up directly as the view lagging behind your head.
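For a feel of the numbers, here's the time between updates at the refresh rates mentioned earlier. It's just arithmetic (1000 ms divided by the rate); `frame_interval_ms` is my own helper name, and this only covers the gap between updates, not sensor or rendering delay.

```python
def frame_interval_ms(refresh_hz):
    """Milliseconds between consecutive updates at a given refresh rate."""
    return 1000.0 / refresh_hz


for hz in (60, 120, 240):
    print(f"{hz} Hz -> {frame_interval_ms(hz):.2f} ms per update")
# 60 Hz -> 16.67 ms per update
# 120 Hz -> 8.33 ms per update
# 240 Hz -> 4.17 ms per update
```

So going from 60 to 120 Hz halves the worst-case wait before a new head orientation can reach the screen.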

Put a bit differently: the Wiimote and previous-gen motion trackers read acceleration, while the new motion trackers read position. That's glossing over a bunch of details, but it will help you conceptualize how these are different.

That said, the stigma about motion controls needs to go away. For all the good it did getting this tech into the limelight, the Wii seems to have given people a terrible conception of what motion controls are. Less gesture, more absolute positioning. Next-gen motion controls (by which I mean the STEM and not, like, the new Kinect, which shares a lot of the original Kinect's limitations) will be a lot more accurate and work the way people imagined they would.